Apple has introduced an expansive set of new features designed to help parents protect their children from potentially explicit and harmful material, and to reduce the chances that a predator can contact a child with dangerous material. The new features cover Messages, Siri, and Search, and include on-device AI analysis of incoming and outgoing images.

Protecting young users

The new child safety features focus on three specific areas, Apple explained in an announcement today, noting that it developed these new tools with child safety experts. The three areas are the company’s Messages platform, the use of cryptography to limit the spread of Child Sexual Abuse Material (CSAM) without violating the user’s privacy, and expanded help and resources in Siri and Search when ‘unsafe situations’ are encountered.

“This program is ambitious, and protecting children is a crucial responsibility,” Apple said as part of its announcement, explaining that it plans to ‘evolve and expand’ its efforts over time.

Messaging tools for families

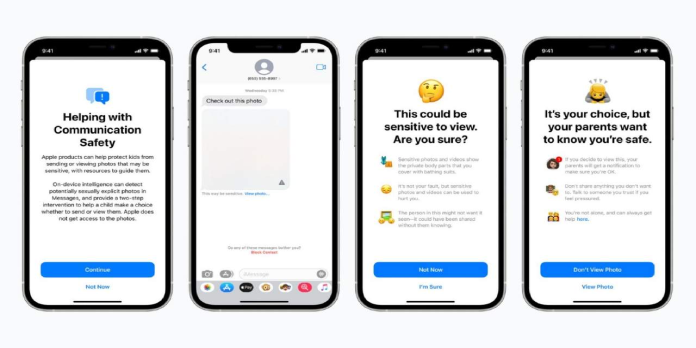

Once the new features are available, Apple says its Messages app will add new tools that warn parents and children when sexually explicit images are sent or received. For example, if a child receives an image flagged as explicit, the content will automatically be blurred. The child will instead get a warning about what they’ll see if they tap the attachment, as well as resources to help them deal with it.

Likewise, if Apple’s on-device AI-based image analysis determines that a child may be sending a sexually explicit image, Messages will first show a warning before the content is sent. Because the image analysis takes place on the device, Apple won’t have access to the actual content.

Apple explains that parents can choose to receive an alert if their child decides to tap and view a flagged image, or if their child sends an image that the AI has detected as potentially sexually explicit. The features will be available to accounts that have been set up as families.

Using AI to detect while protecting privacy

Building upon that, Apple is also targeting CSAM images stored in a user’s iCloud Photos account using “new technology” arriving in future iPadOS and iOS updates. The system will detect known CSAM images and enable Apple to report these users to the National Center for Missing and Exploited Children (NCMEC).

To detect these images without violating the user’s privacy, Apple explains that it won’t scan cloud-stored images. Instead, the company will use CSAM image hashes from the NCMEC and similar organizations to conduct on-device matching.

The “unreadable set of hashes” is stored locally on the user’s device. For this to work, Apple explains that its system will run a matching process against this database before an image is uploaded from the user’s device to iCloud Photos.
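The basic idea of checking an image against a local database of known hashes before upload can be sketched in a few lines of Python. This is only an illustration under loud assumptions: it uses SHA-256 over the raw bytes as a stand-in for whatever image hash Apple actually uses, a plain in-memory set rather than an unreadable database, and it returns the match result directly, which the real system is described as not doing. The function names `image_hash`, `build_known_hash_set`, and `matches_known_hash` are hypothetical.

```python
import hashlib


def image_hash(image_bytes: bytes) -> str:
    """Stand-in hash: SHA-256 of the raw bytes (an assumption, not Apple's hash)."""
    return hashlib.sha256(image_bytes).hexdigest()


def build_known_hash_set(known_images) -> frozenset:
    """Build the local database: only hashes are kept, never the images."""
    return frozenset(image_hash(data) for data in known_images)


def matches_known_hash(image_bytes: bytes, known_hashes: frozenset) -> bool:
    """Run the check on the device, before the image would be uploaded."""
    return image_hash(image_bytes) in known_hashes


# Hypothetical placeholder data standing in for the hash database's sources.
known_hashes = build_known_hash_set([b"known-image-1", b"known-image-2"])

print(matches_known_hash(b"known-image-1", known_hashes))
print(matches_known_hash(b"holiday-photo", known_hashes))
```

A byte-level hash like this only matches exact copies of a file, so it should be read as a picture of the lookup step rather than of the matching technology itself.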

Apple elaborates on the technology behind this, going on to explain:

This matching process is powered by a cryptographic technology called private set intersection, which determines if there’s a match without revealing the result. The device creates a cryptographic safety voucher that encodes the match result alongside additional encrypted data about the image. This voucher is uploaded to iCloud Photos alongside the picture.

That system is paired with threshold secret sharing, meaning that Apple can’t interpret the safety vouchers’ content unless the account crosses a threshold of “known CSAM content.” In addition, Apple says the system is highly accurate, meaning there would be only a one-in-one-trillion chance per year that an account could be mistakenly flagged.

If an account does exceed this threshold, Apple will then be able to interpret the safety vouchers’ content, manually review each report to confirm that it is accurate, then disable the account and send a report to the NCMEC. An appeals option will be available for users who believe their account was disabled in error.
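To give a feel for how a threshold scheme behaves, here is a minimal sketch of Shamir's secret sharing in Python. Choosing Shamir's scheme is an assumption made for illustration, since the announcement names threshold secret sharing without saying which construction is used. The prime, the function names `split_secret` and `recover_secret`, and the example numbers are all hypothetical.

```python
import secrets

# A Mersenne prime defining the finite field (illustrative choice).
PRIME = 2**127 - 1


def split_secret(secret: int, threshold: int, num_shares: int):
    """Split `secret` so that any `threshold` shares can rebuild it."""
    # Random polynomial of degree threshold - 1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, num_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner evaluation mod PRIME
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares) -> int:
    """Lagrange interpolation at x = 0 over the given shares."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # pow(den, -1, PRIME) is the modular inverse (Python 3.8+).
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret


shares = split_secret(123456789, threshold=3, num_shares=5)
print(recover_secret(shares[:3]) == 123456789)
```

With fewer shares than the threshold, interpolation yields an unrelated value, which loosely mirrors the claim that nothing can be interpreted until enough matches accumulate.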

Siri’s here to help

Finally, the new features are also coming to Search and Siri. In this case, parents and children will see expanded guidance in the form of additional resources. This may include, for instance, the ability to ask Siri how to report CSAM content they may have discovered.

Beyond that, both products will also soon intervene in cases where a user searches or asks for content related to CSAM, according to Apple. “These interventions will explain to users that interest in this topic is harmful and problematic, and provide resources from partners to get help with this issue,” the company explains.

Wrap-up

The new features are designed to address the growing need to shield young users from the Internet’s vast array of harmful content while still enabling them to safely use the technology for education, entertainment, and communication. The technology may help reassure parents who would otherwise be concerned about letting their children use these devices.

Apple says its new child safety features will be available to users with iOS 15, iPadOS 15, watchOS 8, and macOS Monterey updates scheduled for release later this year.